Some jobs are being automated, but we’ll need workers who can use their brains for a long time.

The opinion piece below is written by Mike Collins, a veteran of the manufacturing industry and the author of The Rise of Inequality and the Decline of The Middle Class. He can be reached via his website, mpcmgt.com.

It's the year of the “Rise of the Machines,” with headlines predicting that robots and artificial intelligence will eliminate millions of jobs in the near future (and eventually render humans superfluous).

One article in The New York Times, aptly titled Robocalypse Now?, reports that "the big minds of monetary policy were seriously discussing the risk that artificial intelligence would eliminate jobs on a scale that would dwarf previous waves of technological change." In another NYT article, venture capitalist and Artificial Intelligence Institute President Kai-Fu Lee writes that we are about to see mass displacement of jobs due to the advancement of artificial intelligence.

These comments illustrate the fear and paranoia related to job elimination, and it is true that some jobs are being lost to advancements in technology. But I think all of these writers have missed the essential point: we will still need workers whose primary skill is their own mind.

I worked in the industrial automation business for 30 years and oversaw the installation of automatic machines and robots that replace workers in manual (and often back-breaking) jobs. Automation of production lines is not new; it began in the 1950s.

But I want to make the case that there are definite technological limitations and barriers that will restrict how many jobs can be automated. The projections of millions of jobs being eliminated by the rise of the machines are simply hyperbole.

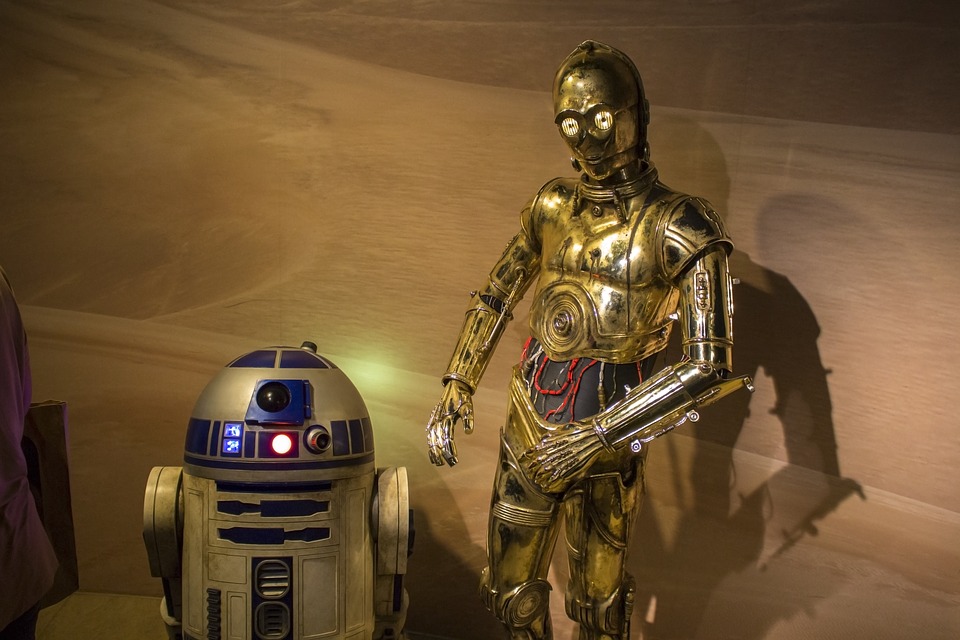

American manufacturing has come a long way in automating factories with robots and computers. These advances in technology — along with movies like the Terminator and the Star Wars sagas — have led to a lot of speculation about how far artificial intelligence can be developed. In the Terminator series, for example, an artificial defense network called Skynet becomes so advanced that machines become self-aware and cause an atomic war. The basic assumption is that microprocessors can become so sophisticated that they can think like humans.

You can see this idea in the titles of various articles in the last year:

“Intelligent robots threaten millions of jobs”

“The Internet Of Things, Robotic Manufacturing And The End of the World”

I was a general manager in a company that built material-handling robots, which replace people doing the hard work of stacking bags or boxes on pallets all day. Robots use digital controls much like your laptop. The design includes transistors (on/off switches), a central processing unit (CPU), and some kind of operating system (like Windows), and it is based on binary logic (instructions coded as 0s and 1s).

Computers are linear designs and have continually grown in terms of size, speed and capacity. In 1971, the first Intel microprocessor had 2,300 transistors, but by 2011 the Intel Pentium microprocessor had 2.3 billion transistors. The rise of supercomputers has led many people to think that we must finally be approaching the human brain in speed and capability, and that we must be on the verge of creating a C-3PO that can think and converse just like a human. But the truth is that every tiny movement of the robot is pre-programmed.
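To put the transistor figures above in perspective, the growth can be expressed as a doubling time. The Python sketch below is only a rough illustration: it uses the two numbers quoted in this article (2,300 transistors in 1971 and 2.3 billion in 2011), it assumes smooth exponential growth between those two points, and the function name `doubling_time_years` is made up for this example.

```python
import math


def doubling_time_years(count_start, count_end, years):
    """Years per doubling, assuming steady exponential growth (an assumption)."""
    doublings = math.log2(count_end / count_start)
    return years / doublings


# Figures quoted in the article.
transistors_1971 = 2_300
transistors_2011 = 2.3e9

growth_factor = transistors_2011 / transistors_1971
years_per_doubling = doubling_time_years(
    transistors_1971, transistors_2011, 2011 - 1971
)

print(f"Growth factor: {growth_factor:,.0f}x")
print(f"Doubling time: about {years_per_doubling:.1f} years")
```

On these figures, transistor counts grew about a million-fold in 40 years, which works out to a doubling roughly every two years.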

Robots are very good at replacing people in highly repetitive jobs, such as picking up boxes from a conveyor and placing them on a pallet, and those are the only kinds of jobs they are going to take over. They are not likely to replace auto mechanics, who use insights from experience to find out what is wrong with a car.

The Human Brain

The human brain is not a digital computer design. It is some kind of analogue neural network that encodes information on a continuum. It does have its own important parts that are involved in the thinking process, but the way these parts communicate and operate is totally different from a digital computer.

Neurons are the real key to how the brain learns, thinks, perceives, stores memory, and performs a host of other functions. The average brain has at least 100 billion neurons, which are connected to axons, dendrites and glial cells that each have thousands of synapses that transmit signals via electrochemical connections. It is the synapses that are most comparable to transistors because they turn off or on. Because each neuron contains axons and dendrites that have around 1,000 synapses, the total capability is 100 billion times 1,000, or about 100 trillion synapses (compared to the 2.3 billion transistors in a Pentium chip).
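The comparison in the paragraph above is simple multiplication, and it can be checked in a few lines of Python. This is a back-of-the-envelope sketch using only the article's round numbers; the variable names are invented for illustration, and treating one synapse as equivalent to one transistor is the article's analogy, not a measured equivalence.

```python
# Round figures quoted in the article.
NEURONS = 100_000_000_000          # at least 100 billion neurons
SYNAPSES_PER_NEURON = 1_000        # around 1,000 synapses each
CHIP_TRANSISTORS = 2_300_000_000   # 2.3 billion transistors on one chip

total_synapses = NEURONS * SYNAPSES_PER_NEURON
ratio = total_synapses / CHIP_TRANSISTORS

print(f"Total synapses: {total_synapses:,}")
print(f"Synapses per chip transistor: about {ratio:,.0f}")
```

By this count the brain has tens of thousands of synapses for every transistor on the chip, before accounting for the fact that a synapse can do more than switch on and off.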

Each neuron is a living cell and a computer in its own right. A neuron has the signal processing power of thousands of man-made transistors. Unlike transistors, neurons can modify their synapses and modulate the frequency of their signals. Each neuron has the capability to communicate with 10,000 other neurons.

Unlike digital computers with fixed architecture, the brain can constantly re-wire its neurons to learn and adapt. Instead of running programs, neural networks learn by doing and remembering, and this vast network of connected neurons gives the brain excellent pattern recognition.

Limitations of Digital Computers

We have been so successful in continuously shrinking microprocessor circuits and adding more transistors year after year that people have begun to believe that we might actually equal the human brain. But there are problems.

The first is that in digital computers, all calculations must pass through the CPU, which eventually slows down its program. The human brain doesn’t use a CPU and is much more efficient.

The second problem is the limit on how far circuits can shrink. In 1971, when Intel introduced the 4004 microprocessor, it could hold 2,300 transistors that were about 10 microns wide. Today a Pentium chip has transistors that are down to 22 nanometers wide (a nanometer is one billionth of a meter). Developers are currently working at 14 nanometers, and hope to reach 10 nanometers. The problem is that they are getting close to the size of a few atoms. At that scale, developers will begin to run into problems of quantum physics that could end size reduction for digital computers.
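The scale of that shrinkage is easier to see when the widths are put in the same unit. The sketch below converts the 10-micron figure to nanometers and compares it with the 22, 14 and 10 nanometer widths mentioned above. It is a minimal illustration using only the article's numbers, and the variable names are invented for this example.

```python
NM_PER_MICRON = 1_000

# Transistor width on the 1971 Intel 4004, as quoted in the article.
width_1971_nm = 10 * NM_PER_MICRON

# Later widths mentioned in the article, in nanometers.
later_widths_nm = (22, 14, 10)

# How many times narrower each later transistor is than the 1971 one.
linear_shrink = {w: width_1971_nm / w for w in later_widths_nm}

for width_nm, factor in linear_shrink.items():
    print(f"{width_nm} nm is about {factor:,.0f} times narrower than 10 microns")
```

Going from 10 microns to 10 nanometers is a thousand-fold reduction in width, which is why each further step brings designers closer to atomic dimensions.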

The third problem is that in order to increase speed and capacity, computer scientists have had to add billions of transistors and hundreds of CPUs to build very large supercomputers. This seems like a very inefficient way to increase processing power compared to a 3.5-pound brain. Trying to emulate the human brain should require a totally different approach.

Michio Kaku’s book The Future of the Mind shows that the latest IBM supercomputer (the Blue Gene/Q Sequoia) is capable of 20.1 quadrillion calculations per second (20.1 petaflops). But operating at these speeds requires 7.9 megawatts of power. To build a supercomputer that would match the computing power of the human brain would take a thousand Blue Gene computers. As Kaku writes, “[t]he energy consumption would be so great that you would need a thousand-megawatt nuclear power plant to generate the electricity. And to cool this monster you would need to divert a river and send it through the computer circuits.”
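Multiplying out the figures in the paragraph above gives a rough sense of the power involved. The sketch below takes the quoted numbers at face value (7.9 megawatts per machine, a thousand machines, a thousand-megawatt power plant) and assumes power scales linearly with the number of machines, which is a simplification. The variable names are invented for illustration.

```python
# Figures quoted in the article.
SEQUOIA_POWER_MW = 7.9      # power draw of one Blue Gene/Q Sequoia
MACHINES_NEEDED = 1_000     # machines said to match one human brain
PLANT_OUTPUT_MW = 1_000     # output of a thousand-megawatt power plant

# Assumption: total power is simply per-machine power times machine count.
total_power_mw = SEQUOIA_POWER_MW * MACHINES_NEEDED
plants_needed = total_power_mw / PLANT_OUTPUT_MW

print(f"Total power: about {total_power_mw:,.0f} MW")
print(f"Thousand-megawatt plants needed: about {plants_needed:.1f}")
```

Under that linear assumption, the total comes to roughly 7,900 megawatts, closer to eight thousand-megawatt plants than to one.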

To create enough intelligence to match the average 3.5-pound human brain would require a computer the size of a small city.

There is a huge difference between robots with digital brains taking human jobs and robots that are conscious entities. At this point, we really don’t understand what constitutes intelligence, consciousness, or human cognition. We will need to really define these concepts to create a non-living machine that is self-aware.

Now I admit that current computers and software are replacing any job that is repetitive and can be reduced to 0s and 1s. Jobs like travel agents, word processors, typists, and bookkeepers have been eliminated, and the process is ongoing.

But replacing humans with a protocol droid like C-3PO is a long way off, and such a machine probably can’t be built from our current computer designs using silicon and transistors. Perhaps a computer based on living cells or a machine that can operate at a quantum level using nanotechnology will be a possible answer. Or possibly in the distant future we can install a human brain in a robot.

Any fears you might have of robots becoming self-aware and taking your job in the near future, however, have been greatly exaggerated.